What’s Our Job When the Machines Do Testing?

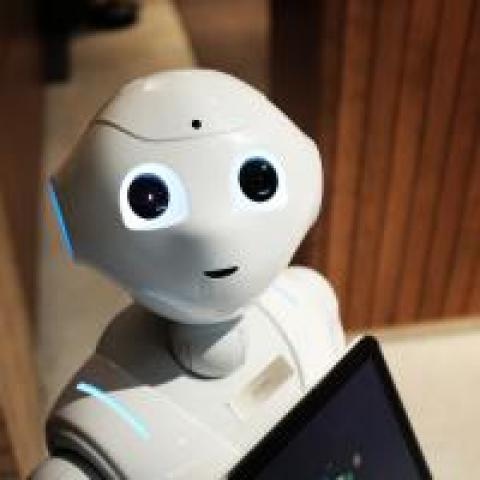

The application of analytics, artificial intelligence (AI), and machine learning is transforming jobs in industries once thought to be “safe” from automation.

Law firms are using AI-based machines to do the research that used to define the role of first-year lawyers. Trading decisions for our retirement accounts are managed by algorithmic robots crunching through massive amounts of historical data and real-time market metrics. The progress of the autonomous vehicle, underpinned by AI, is on the verge of replacing drivers across the transportation sector. What businesses in industry after industry have uncovered is this: Processes that have large amounts of data, a representative model, and a generally understood set of rules are candidates for automation.

Geoff Colvin, the author of Humans Are Underrated, points to the first-year lawyers described earlier as a model for those of us whose job consists of analysis, subtle interpretation, strategizing, and persuasion. These jobs are gradually—sometimes entirely—being transformed as increasing sets of tasks are delegated to a “smart assistant.”

Our job in software testing is also composed of many tasks. Given the nuances of requirements elaboration, the back-and-forth in establishing “expected behavior,” test case analysis, and the numerous other cognitive tasks that we deal with, it’s a safe bet that our jobs won’t be taken over by machines anytime soon. However, for the reasons cited by Colvin, those of us in the test industry would be wise to heed cross-industry applications of analytics and machine learning and begin staking out the proper role of the machine in our testing domain.

Jason Arbon published a test autonomy maturity model that rates an organization’s automation capabilities. An L0 organization (manual testing) tests entirely by hand. An L1 organization (scripted testing) has a firmly entrenched culture of scripting regression suites. L2 and above (exploratory bots, as well as human-directed, generative, and fully autonomous testing) are for organizations that have started to move into the realm of automating their cognitive and complex tasks.

For an L1 test organization well-versed in a world of automation, whether it be scripting of regression tests or the automation of build and deployment processes, analytics and machine learning represent the next generation of automation. And although they are just another tool to be considered for our testing toolbox, they open up the possibility of automating tasks in our job that we may never have imagined.

While many of the tasks across our diverse test practices are similar, each of our jobs has unique challenges, so priority will be determined by the specifics of our organizational context. So your job, when the machines can do testing, is to figure out what tasks you want them to do for you.

Whether your high-priority pain points include triaging a bounty of automated test failures, avoiding wasted effort researching a defect only to find it is a duplicate, rapidly predicting the root cause of a failure, deciding whether to automate or retire a test case, or increasing regression test coverage in a time-constrained period, there's a good chance that employing a machine as your smart assistant might be the next automation approach for you to consider.
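
To make one of those pain points concrete, here is a minimal sketch of flagging a likely duplicate defect before anyone spends time researching it. It is only an illustration under stated assumptions: it uses plain word-overlap (Jaccard) similarity rather than a trained machine learning model, and the function names, the `BUG-...` identifiers, the sample report texts, and the `0.5` threshold are all hypothetical, not taken from any particular tool.

```python
import re


def tokens(text):
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Jaccard similarity of two token sets: shared words / all words."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def likely_duplicates(new_report, existing_reports, threshold=0.5):
    """Return (report_id, score) pairs scoring at or above threshold, best first.

    The threshold is an assumed starting point, not a recommended value.
    """
    new_tokens = tokens(new_report)
    scored = [
        (report_id, jaccard(new_tokens, tokens(text)))
        for report_id, text in existing_reports.items()
    ]
    matches = [(rid, score) for rid, score in scored if score >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)


# Hypothetical defect backlog, for illustration only.
existing = {
    "BUG-101": "Login page crashes when password field is empty",
    "BUG-102": "Report export to CSV drops the header row",
}

candidates = likely_duplicates(
    "Login page crashes when the password is empty", existing
)
for report_id, score in candidates:
    print(f"{report_id}: similarity {score:.2f}")
```

A smart assistant built on real defect history would likely replace the word-overlap score with a learned model, but the workflow is the same: score the new report against the backlog and surface the closest matches for a human to confirm.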

Geoff Meyer is presenting the keynote What’s Our Job When the Machines Do Testing? at the STAREAST 2018 conference, April 29–May 4 in Orlando, FL.